Artificial Compute

In early 2026, Software-as-a-Service (SaaS) stocks slid by 4%, wiping out $1 trillion in market capitalization. Analysts attributed the move to the market's reaction to the notion that “software is solved.” Products like Anthropic’s Claude Cowork sparked the idea that artificial intelligence’s next horizon will commoditize the SaaS industry, because a service can be replicated with a simple prompt (and perhaps a test harness; see slopfork).

There may be a situation now where the ability to make stuff exceeds the capacity to consume stuff. (Friedberg, All In, 2-27-26)

Despite the initial panic, the narrative has started to self-correct. The idea of replacing an expensive Salesforce vendor agreement with an in-house, vibe-coded MVP seems unlikely. Partnering with a SaaS company like Salesforce provides broader integrations, reliability, and support that are not trivial to replicate and maintain. The Service component in SaaS is more valuable than the Software.

The market reaction still demonstrated the paranoid worship of artificial intelligence. Just the thought of its impact on an industry can send securities tumbling. Many seem to think AI will disrupt and transform our daily lives, jobs, and the global economy. Others have pointed to unit economics of AI that seem problematic. My view is that a transformation is happening and will continue to happen, but it’s not being driven by advances in Large Language Models.

unsplash.com/@omilaev

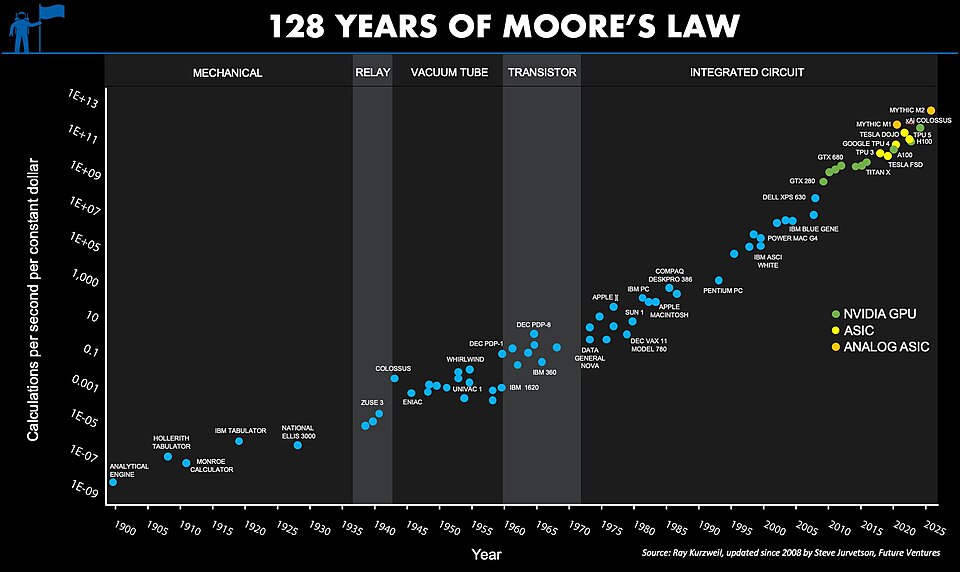

I believe Generative AI advances are a small, predictable part of a larger and older story, which I will call the Compute Quality Value Gap. My theory is based on Moore’s Law.

The number of transistors on a chip doubles every 24 months

The price per transistor decreases proportionally. Computers get more advanced, and compute becomes cheaper, in theory. This theory seems to have held up relatively well for half a century.

Wikipedia

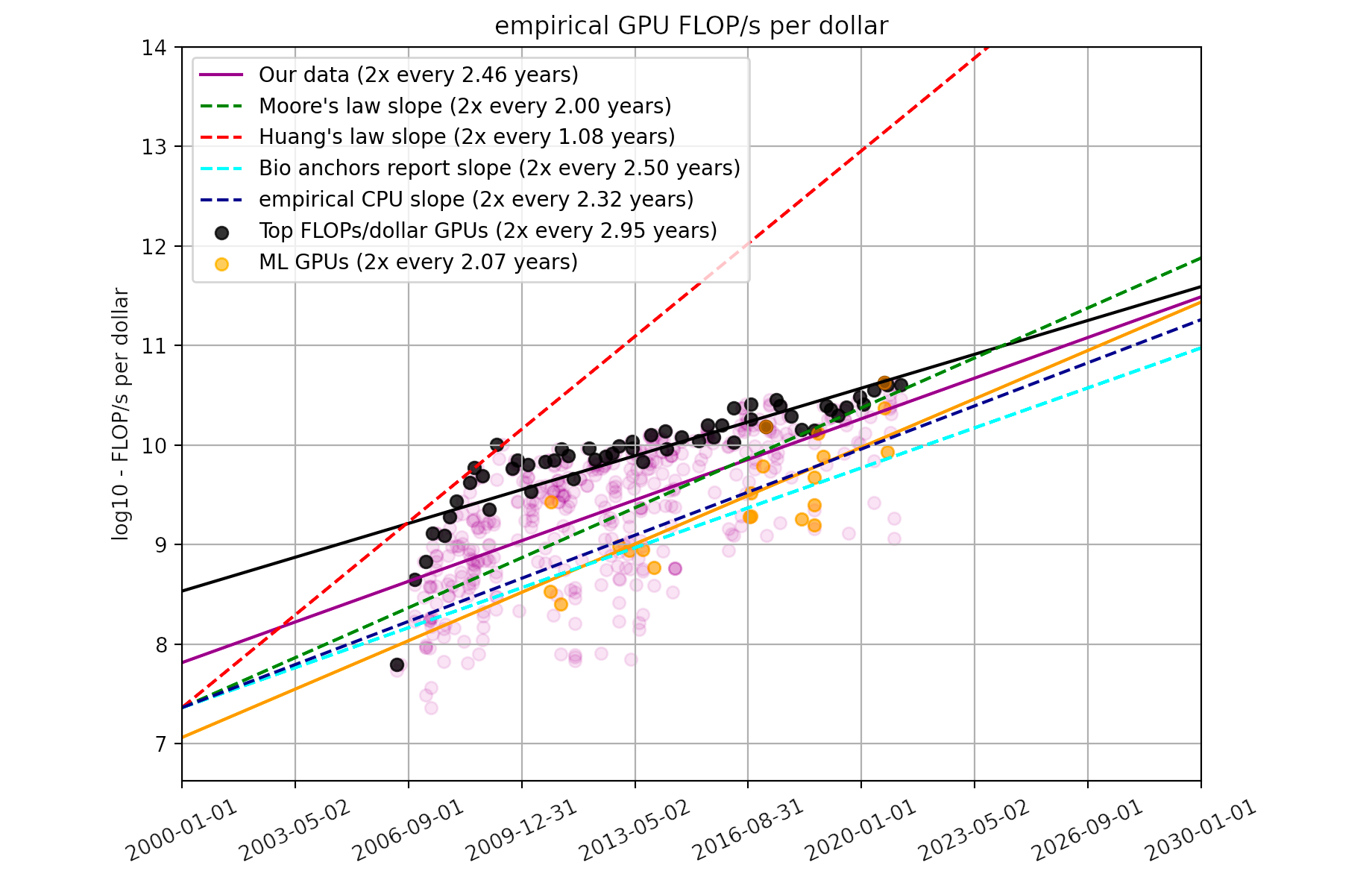
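To put the doubling claim in numbers, here is a minimal sketch of the arithmetic. It assumes a clean 24-month doubling period and, for the cost side, that the price of a chip stays flat, which is an idealization rather than a description of any real product line. The function names are made up for illustration.

```python
def transistor_multiple(years: float, doubling_period_years: float = 2.0) -> float:
    """Growth factor in transistor count after `years`,
    assuming the count doubles every `doubling_period_years`."""
    return 2.0 ** (years / doubling_period_years)


def relative_cost_per_transistor(years: float, doubling_period_years: float = 2.0) -> float:
    """Cost per transistor relative to year zero.
    Assumption: the price of the whole chip does not change."""
    return 1.0 / transistor_multiple(years, doubling_period_years)


if __name__ == "__main__":
    for years in (2, 10, 50):
        growth = transistor_multiple(years)
        cost = relative_cost_per_transistor(years)
        print(f"{years:>2} years: {growth:,.0f}x transistors, "
              f"{cost:.2e} of the original cost per transistor")
```

Under these assumptions, half a century of doubling works out to 2^25, or roughly 33.5 million times as many transistors per chip.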

A similar concept was adopted for GPU (graphics processing unit) development, termed “Huang’s Law” after Nvidia’s current CEO, Jensen Huang. It posits that GPU performance, measured in FLOPS per dollar, increases more rapidly than that of the CPU chips originally described by Moore’s Law.

Marius Hobbhahn and Tamay Besiroglu, Epoch.ai

This might mean that it becomes cheaper every year to produce the same chip. But semiconductor fabrication plants become increasingly sophisticated, chips become denser, and the industry innovates over last year’s process and design. The FLOPS increase and so do the dollars.

The Compute Quality Value Gap asserts that the continuously increasing performance of compute necessitates increasing utility. Compute yield increases with transistor density and power. Investment in fabricating elegant computing architectures on silicon wafers also rises. This interaction compels the computing industry to capture value at a pace that matches growing compute efficiency. That task falls on software because it gives the electrons dancing across the 200 billion transistors in a modern chip one thing: a purpose. Purpose is the key to ascribing the value that keeps the cycle of compute innovation moving forward.

Computation’s Value Proposition

Conventionally, a GPU had more ALU (arithmetic logic unit) density than an ordinary CPU, which suited it to the mathematically demanding tasks of graphical rendering. The GPU was sold at a premium and became the central component in gaming computers. Gaming is a consumer product that benefits from computing resources by delivering entertaining, interactive worlds. The industry today accrues an estimated $187 billion in revenue. However, the value of “graphics cards” grew beyond gaming applications in search of more verticals with greater capitalization.

Cloud computing offered a new, stable model to capture value from compute. A hyperscaler could spin up massive arrays of compute in sprawling data centers and lease out the resources with a high-margin time-sharing model. The data center operators could keep upgrading their hardware and upsell capacity to tenants. The entire system financializes compute, measured in cloud credits. Countless organizations pursued digital transformations with cloud adoption strategies. Adoption attracted more players to scale up their cloud operations and put pressure on the margins and growth of cloud companies.

Other applications of compute have been less than stable. Bitcoin was created in 2009 as a decentralized monetary system. The backbone of Bitcoin uses asymmetric cryptography to secure a distributed ledger of transactions, now known as a blockchain. The coins themselves are generated using hashing algorithms, a compute-intensive mathematical process. Naturally, producers of bitcoin often relied on the GPU to solve this problem. As the speculative value of Bitcoin grew, so did the cost of the hardware used to mine it, reinforcing the value of high-performing hardware. Like cloud computing, Bitcoin is another monetization of computational processes. Its price in dollars is volatile, prone to opulent rushes followed by devastating crashes.
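To make "generated using hashing algorithms" concrete, here is a toy proof-of-work sketch. It is not Bitcoin's actual mining procedure, which hashes a binary block header with double SHA-256 against a numeric target. It is a simplified illustration of the same idea, and the `mine` function and its parameters are hypothetical names chosen for this example.

```python
import hashlib
from typing import Tuple


def mine(block_data: str, difficulty: int) -> Tuple[int, str]:
    """Brute-force a nonce so that SHA-256(block_data + nonce), in hex,
    starts with `difficulty` zeros. Toy stand-in for proof-of-work."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1  # no shortcut exists: just try the next candidate


if __name__ == "__main__":
    # Each extra hex zero multiplies the expected work by 16.
    for difficulty in (1, 2, 3, 4):
        nonce, digest = mine("example transactions", difficulty)
        print(f"difficulty {difficulty}: nonce={nonce}, hash={digest[:16]}...")
```

The only way to find a valid nonce is to keep guessing, so whoever can compute hashes fastest wins most often. That is why mining rewarded parallel hardware like the GPU.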

In a related venture, cryptocurrency technology experimented with “non-fungible tokens” (NFTs). An NFT was a unique digital asset representing ownership of a piece of data. The most common application was to bind an image to a crypto token, and then to trade the token as a collectible. Although it may sound dubious now, at the height of the boom a picture of an unamused ape owned by Justin Bieber was valued at $1.3 million; it now has a top bidding offer of $11 thousand. The NFT boom didn’t directly monetize compute like the proof-of-work algorithm did; it attempted to capitalize data itself.

Bored Ape Yacht Club #3001, OpenSea.io

Soon after the demise of NFTs, another graphically intensive application entered the hype cycle: Virtual Reality. VR uses mathematically derived techniques to project a realistic view of a virtual world. Its highly immersive experience seemed like a vehicle for enormous value capture, judging by the estimated $73 billion Meta invested in building its VR product, Horizon Worlds. Recently, Meta announced they are shutting down the project, to the surprise of no one. In a similar farce, Apple launched a $3,500 VR headset that saw dismal sales, frequent returns, and scaled-back production orders. For a moment, spatial computing seemed like the next big thing, until it wasn’t.

The premise is familiar. Exciting technology is unlocked through increasing compute sophistication, intensified investment, and ingenuity. Innovation collides with reality when these inventions seek validation in the marketplace, with mixed results. If Moore’s Law continues forever, will compute-based products infinitely improve?

Compute Quality Ladder

In my theory of the Compute Quality Value Gap, computers keep getting better, which creates pressure on the tech sector to figure out how to escalate consumer value to match the increase. If an organization produces laptops, and each year its products get more performant, then customers may upgrade over time to enjoy a better experience. However, there’s a natural limit to this for most people. When even the most compute-intensive app operates with crisp efficiency, why would they want to pay $2000 for the next version? The same concept applies to fashionable tech trends. Entire new product categories are erected to commodify an endless stream of hardware innovation.

The quality ladder model expects innovations to continuously occur that capture value and disrupt previous inventions. The Nvidia Blackwell architecture “smushes” two chips together as a single GPU, and it renders older GPU performance benchmarks obsolete. Next year’s chip will find a way to displace Blackwell. This model is profit-seeking, so if there are diminishing returns to innovation, then growth will slow and monopolistic actors will even obstruct development to avoid disruption. In my theory, the hardware innovations actually lead the software innovations.

AI hype is a retrofit use-case to justify the economy of GPU and ASIC sales. The quality ladder for hardware can only be climbed by finding an application to consume resources, whether it’s cloud compute, the blockchain, virtual reality, or a large language model. The persistent hype cycle is an effort to close the gap between the compute quality improvement and consumer value to validate the improvement.

Compute itself is not the thing. AI is only the thing for right now, and there will be more things in the near future. Consider any emerging technology that requires a lot of math and that would benefit from doing math faster. This will likely be the next cycle for compute to scale in search of self-validation. I believe that a clear successor to the LLM wave lies in robotics, autonomous vehicles, and genetic engineering. That is not to say that the LLM will disappear. It will continue to be used where it adds value greater than its actual costs, some of which I will recommend in another post.

New hype cycles will draw our growing stockpiles of compute in other directions and keep the evolution of hardware moving forward. The Compute Quality Value Gap is not about building towards any particular thing; it is a depth-first search for validation of increasing compute efficiency. The solution to this value-seeking function is you. Firms on the frontlines of the value chain must determine what maths you want to pay for. Entire new categories of the software industry are created to test various hypotheses about whether you would directly or indirectly vote for this iteration of compute applications with your wallet. Some evolve to become self-sustaining businesses while others wash ashore quietly, delivering small, boring value that no longer tips the market.

I expect this cycle to continue as it has since the discovery of fire in a damp cave. I also wonder if some natural limit exists for computation that can ultimately be consumed downstream, or if this system approaches infinity where life becomes a simulation of itself.